The question then becomes which portion to use. Sampling implies we only use a portion of the data set. If you know that the data quality is high, you may be better off simply sampling the data to reduce the computational expense. However, big data infrastructure may not always be available and you may have to analyze data in-memory. The first of these scenarios is quite common: the entire space of big data analytics is dedicated to address these kinds of issues. For Statistica see this example and for in RapidMiner its called Normalization In any such situations, data type transformations are required. Similarly in the converse case, if the label attribute is polynomial (multiple categories), you cannot use a classification algorithm such as logistic regression, which only works with binomial (yes/no, true/false) type variables.

In this case you will need to transform the label attribute into a continuous variable. For example, if the target or label variable is categorical, then you cannot use regression or generalized linear models to predict it.

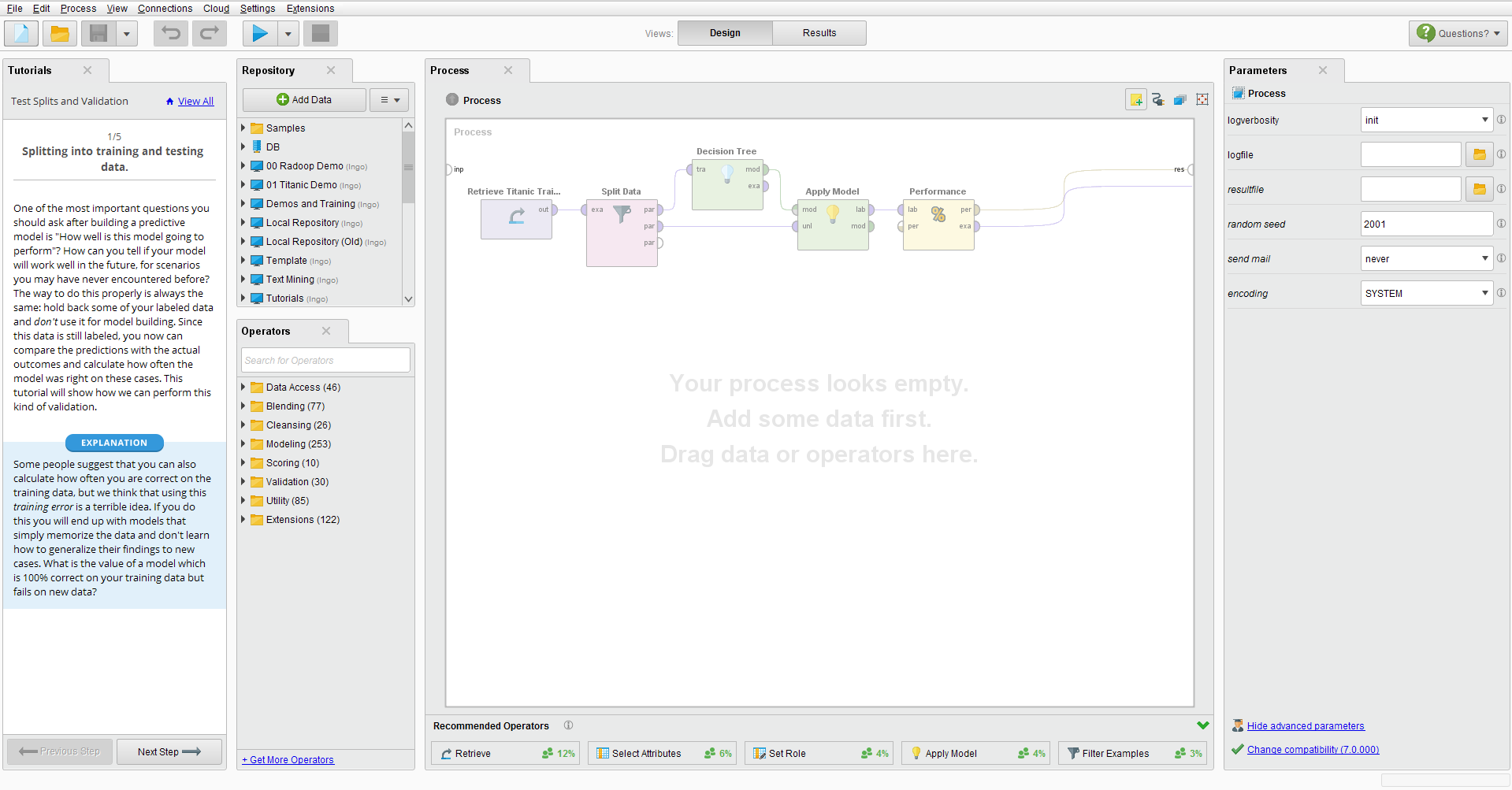

Data Transformation: Some algorithms do not work on the value types of certain attributes.For example, in Dell Statistica 12 you can do this by ‘ Standardisation‘ in the data tab, whereas in RapidMiner, it can be done by Normalization. Different software’s have different ways to achieve this task. This is easily achieved by dividing each attribute by its largest value, for instance. Normalization implies transforming all the attributes to a common range. In order to eliminate or minimize such bias, we must Normalize the data. If you have one attribute whose range spans millions (of dollars, for example) while another attribute is in a few tens, then the larger scale attribute will influence the outcome. Data Scaling: Some algorithms, such as k-nearest neighbors are sensitive to the scale of data.A target or label attribute is the dependent variable which is being predicted. Recall in this context, attributes are variables (columns in the data spreadsheet) and each row in this column is a data value. Below are listed few common instances where data preprocessing is required. These are required by the nature of available data and algorithms. There are usually several data preprocessing steps required before applying any machine learning algorithms to data.
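The divide-by-largest-value normalization described under Data Scaling can be sketched in a few lines of plain Python. This is only a minimal illustration, not how Statistica or RapidMiner implement it: the function name `scale_by_max` and the income/age figures are hypothetical, and the sketch assumes all values are numeric.

```python
def scale_by_max(rows):
    """Divide every column by its largest absolute value.

    `rows` is a list of equal-length lists of numbers (one list per record).
    A column of all zeros is left unchanged to avoid dividing by zero.
    """
    columns = list(zip(*rows))  # transpose: one tuple per attribute
    max_abs = [max(abs(v) for v in col) or 1.0 for col in columns]
    return [[v / m for v, m in zip(row, max_abs)] for row in rows]


# Hypothetical records: [income in dollars, age in years]
data = [
    [2_500_000.0, 25.0],
    [1_000_000.0, 50.0],
    [5_000_000.0, 40.0],
]

scaled = scale_by_max(data)
for row in scaled:
    print(row)
```

After scaling, both attributes lie between 0 and 1 (for non-negative data), so the income column no longer overwhelms the age column when a distance-based method such as k-nearest neighbors compares two records.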
